Last year, Meta introduced Segment Anything, a machine learning tool that can quickly and reliably segment nearly any object in an image. At this year’s SIGGRAPH conference, CEO Mark Zuckerberg unveiled its successor, which extends those capabilities to video and shows how quickly the field is moving.

Segmentation is the process by which a vision model analyzes an image and picks out its parts, recognizing that a dog and the tree behind it are separate things rather than lumping them together. The technique has been refined over decades and has become both faster and more accurate, with Segment Anything marking a major step forward.
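To make the idea concrete, here is a toy sketch of what a segmentation result looks like: one label per pixel. It is not how Segment Anything works (that model is a learned neural network). The tiny character "image", the `segment` function, and the rule of grouping identical neighboring pixels are all assumptions made up for illustration.

```python
from collections import deque


def segment(image):
    """Label connected regions of identical pixel values (toy example only)."""
    height, width = len(image), len(image[0])
    labels = [[0] * width for _ in range(height)]  # 0 means "not yet labeled"
    next_label = 0
    for r in range(height):
        for c in range(width):
            if labels[r][c]:
                continue  # pixel already belongs to a region
            next_label += 1
            labels[r][c] = next_label
            queue = deque([(r, c)])
            # Breadth-first flood fill over the 4 direct neighbors.
            while queue:
                y, x = queue.popleft()
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < height and 0 <= nx < width
                            and not labels[ny][nx]
                            and image[ny][nx] == image[y][x]):
                        labels[ny][nx] = next_label
                        queue.append((ny, nx))
    return labels, next_label


# Hypothetical 4x6 "image": "." is background, "T" a tree, "D" a dog.
IMAGE = [
    "......",
    "..TT..",
    "DD.T..",
    "DD....",
]
LABELS, COUNT = segment(IMAGE)
for row in LABELS:
    print("".join(str(v) for v in row))
```

In this sketch the background, the tree, and the dog each end up with their own label, which is the kind of per-pixel separation a real segmentation model produces for far messier inputs.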

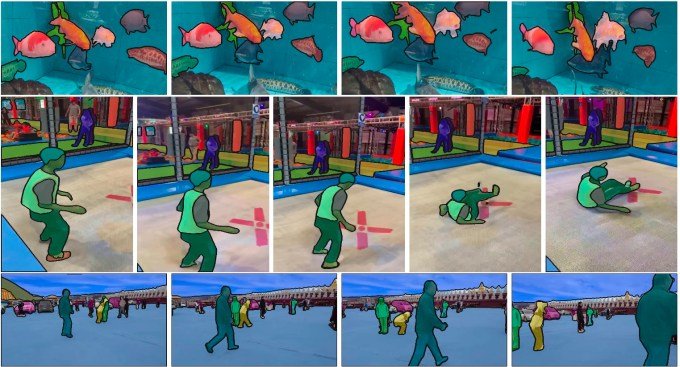

Segment Anything 2 (SA2) is the next iteration, and it works natively on video. The first model could be run on each frame of a video individually, but that is a far less efficient workflow.

Zuckerberg discussed the model in a conversation with Nvidia CEO Jensen Huang, noting that scientists use this kind of technology to study coral reefs and natural environments. He highlighted that SA2 can now do this in video and zero-shot, meaning without additional training on the specific objects involved.

Processing video demands far more computing power than processing still images, but efficiency gains across the field have made it possible for SA2 to run without overwhelming a data center. Fast, flexible segmentation of this kind would not have been feasible even a year ago.

Meta plans to keep the model open and free to use. There is no hosted version for now, though a free demo is available.

Training a model like this requires an enormous amount of data. Meta is releasing a large annotated database of 50,000 videos that it created specifically for this project. The SA2 paper also mentions a second collection of more than 100,000 videos that was used for training and will remain private. Meta has been asked for details about this dataset and why it is not being released.

In recent years, Meta has positioned itself as a frontrunner in the "open" AI space, a stance that predates its current models and goes back to tools such as PyTorch. Freely available models like Llama and Segment Anything have set new standards for AI performance in their areas, though how open they really are is still debated.

Zuckerberg acknowledged that the company's openness is strategic rather than purely altruistic:

“We’re not pursuing open source because of altruism, though it does benefit the ecosystem greatly. Our motivation lies in creating an environment that ensures what we’re developing reaches its highest potential,” he explained.

Regardless of the motivation, the impact is clear. Those interested can explore the project's GitHub repository.

Compiled by Techarena.au.
