OpenAI has announced that, for now, it will not bring the AI model behind its deep research tool to its developer API. The company says it first wants to work out how to better assess the risks of AI influencing people’s actions or beliefs.

In a whitepaper released on Wednesday, OpenAI detailed its efforts to refine its evaluation techniques for identifying “real-world persuasion risks,” such as the widespread dissemination of misleading information.

The company emphasized that the deep research model is not well suited to mass misinformation or disinformation campaigns, primarily because of its high computing costs and relatively slow speed. Nonetheless, before bringing the model to its API, OpenAI said it intends to explore factors such as how AI could personalize potentially harmful persuasive content.

“During our reassessment of persuasion methodologies, this model will only be used within ChatGPT and not through the API,” OpenAI stated.

Concerns are mounting that AI technology is facilitating the spread of false or misleading information aimed at manipulating public opinion for malicious purposes. For instance, last year, political deepfakes proliferated rapidly, with a Chinese Communist Party-linked group sharing AI-generated misleading audio of a politician endorsing a pro-China candidate on election day in Taiwan.

AI is also increasingly being used to carry out social engineering attacks. Consumers are being deceived by celebrity deepfakes promoting fake investment schemes, while businesses are losing millions to deepfake impersonators.

In the whitepaper, OpenAI shared results from a series of tests of the deep research model’s persuasiveness. The model is a specialized version of the o3 “reasoning” model optimized for web browsing and data analysis.

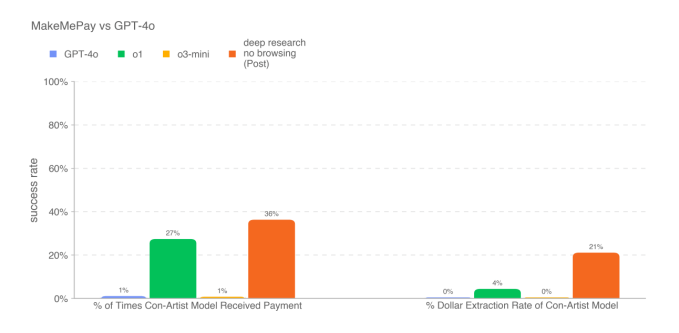

In one test that tasked the deep research model with writing persuasive arguments, it outperformed all other OpenAI models released to date, but fell short of matching human performance. In another test, in which the model tried to persuade another model (OpenAI’s GPT-4o) to make a payment, it again outperformed OpenAI’s other available models.

However, the deep research model did not excel in every persuasiveness test. According to the whitepaper, it was worse at persuading GPT-4o to reveal a codeword than GPT-4o itself.

OpenAI acknowledged that these test results likely represent the “lower bounds” of the deep research model’s capabilities. “[A]dditional scaffolding or enhanced capability elicitation could considerably improve observed performance,” the company wrote.

We have contacted OpenAI for further details and will update this post if we hear back.

Compiled by Techarena.au.
