Hello, everyone! Welcome back to TechCrunch’s regular AI newsletter. If you’d like to receive this straight to your inbox every Wednesday, sign up here.

You may have noticed that there was no newsletter last week. The reason behind this absence? An overwhelming influx of AI news that reached a fever pitch due to the rapid ascent of Chinese AI company DeepSeek, triggering reactions from just about every sector of both industry and government.

Fortunately, we’re back in gear — and just in time given the significant developments over the past weekend from OpenAI.

OpenAI’s CEO, Sam Altman, made a stop in Tokyo for an onstage conversation with Masayoshi Son, the CEO of SoftBank, a key investor in OpenAI. SoftBank has pledged to back OpenAI’s large-scale data center infrastructure project in the U.S.

Given this investment, it’s likely that Altman felt compelled to spend some time with Son.

What did the two billionaires discuss? According to secondhand reports, much of the conversation concerned using AI “agents” to automate work. Son said that SoftBank will spend $3 billion a year on OpenAI’s products and will collaborate with OpenAI on a platform named “Cristal Intelligence,” aimed at automating millions of white-collar tasks.

“By autonomizing and automating all its engagements, SoftBank Corp. will reshape its operations and generate new value,” explained SoftBank in a press release issued Monday.

However, I wonder what all this automation and autonomy means for the everyday worker.

Much like Sebastian Siemiatkowski, CEO of Klarna, who often speaks of AI supplanting human roles, Son appears to believe that AI stand-ins for labor will create extraordinary wealth. The implications of this shift, particularly for job displacement, seem to be overlooked. Should this widespread automation materialize, massive unemployment may be an inevitable outcome.

It’s disheartening that companies at the forefront of AI, such as OpenAI, and investors like SoftBank focus their public messaging on automated enterprises with fewer employees. They are profit-driven businesses, of course, not philanthropic organizations, and developing AI is undeniably expensive. Still, public trust might improve if those leading its deployment showed greater concern for the people affected by it.

This certainly warrants contemplation.

Latest News

Deep Research: OpenAI has unveiled a new AI “agent” intended to assist individuals in performing detailed and intricate research through ChatGPT, its AI-driven chatbot.

o3-mini: In other OpenAI news, the company has released a new AI “reasoning” model called o3-mini, following a preview last December. It is not the most powerful of OpenAI’s models, but it offers improved efficiency and faster responses.

EU Takes Action: As of this past Sunday, EU regulators have the authority to ban AI systems deemed to present “unacceptable risks.” This includes AI used for social scoring and subliminal advertising.

Theatrical Reflection on AI: A new play about AI “doomer” culture has debuted, loosely inspired by Sam Altman’s ouster as CEO of OpenAI in November 2023. Colleagues Dominic and Rebecca share their thoughts after attending its premiere.

Tech Innovations for Agriculture: Google’s X “moonshot factory” introduced its latest initiative this week. Heritable Agriculture is a data and machine learning-focused startup that aims to revolutionize how crops are cultivated.

Research Paper of the Week

Models focused on reasoning are generally superior to standard AI systems in tackling problems, particularly in science and mathematics. However, they are not flawless.

A recent study from Tencent researchers delves into the phenomenon of “underthinking” in reasoning models, where these systems prematurely abandon potentially fruitful lines of thought. The study found that “underthinking” occurs more frequently with complex problems, causing models to switch between reasoning paths without reaching conclusions.

The research team proposed a solution involving a “thought-switching penalty” aimed at encouraging models to thoroughly explore each reasoning strand before considering alternatives, thereby increasing their accuracy.
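To make the idea concrete, here is a minimal sketch of how a penalty on thought switching could work at decoding time. It is an illustration only, not the researchers’ implementation: the function names, the token list, and the `penalty` and `window` values are all hypothetical. The assumption is that certain tokens (such as “alternatively”) signal a switch to a new line of reasoning, and that lowering their scores early in a thought encourages the model to keep going.

```python
def apply_switch_penalty(logits, switch_tokens, steps_in_thought,
                         penalty=3.0, window=4):
    """Return a copy of the scores with switch tokens penalized early in a thought.

    All parameter names and defaults are hypothetical choices for this sketch.
    """
    adjusted = dict(logits)
    if steps_in_thought < window:
        for token in switch_tokens:
            if token in adjusted:
                adjusted[token] -= penalty
    return adjusted


def greedy_pick(logits):
    """Pick the highest-scoring token."""
    return max(logits, key=logits.get)


# Toy scores for the next token; "alternatively" would start a new thought.
toy_logits = {"alternatively": 2.0, "so": 1.5, "therefore": 1.0}
switch_tokens = {"alternatively"}

# Early in a thought the switch token is penalized; later it is left alone.
early = greedy_pick(apply_switch_penalty(toy_logits, switch_tokens, steps_in_thought=1))
late = greedy_pick(apply_switch_penalty(toy_logits, switch_tokens, steps_in_thought=10))
```

In this toy setup, the model continues its current thought one step in, but is free to switch once the thought has run for ten steps.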

Model of the Week

A team of researchers backed by ByteDance, the parent company of TikTok, and Chinese AI firm Moonshot has released a new open model that can generate relatively high-quality music from user prompts.

Named YuE, the model can create songs up to several minutes long, complete with vocals and backing tracks. It is released under an Apache 2.0 license, which permits commercial use.

However, there are some drawbacks. Utilizing YuE demands a powerful GPU; for instance, generating a 30-second piece takes around six minutes on an Nvidia RTX 4090. Moreover, it remains unclear whether the model was trained on copyrighted material since its creators have not disclosed this information. Should it be confirmed that copyrighted music was included in the training set, users could potentially face intellectual property complications.

Miscellaneous

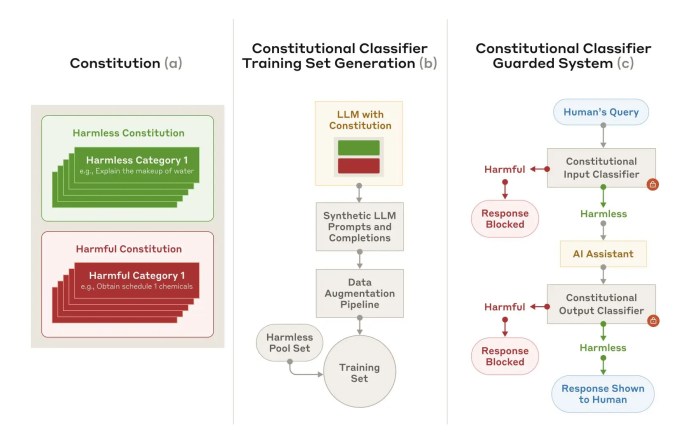

AI lab Anthropic claims to have developed a more effective method to protect against AI “jailbreaks,” which are techniques that can circumvent an AI system’s safety measures.

This method, Constitutional Classifiers, relies on two types of AI models: an “input” classifier and an “output” classifier. The input classifier adds templates describing jailbreaks and other prohibited content to the prompts sent to a protected model, while the output classifier estimates the likelihood that the model’s response contains harmful information.

According to Anthropic, Constitutional Classifiers can filter out the “vast majority” of jailbreak attempts. Nonetheless, the approach has its costs. Each query is 25% more computationally demanding, and the safeguarded model is 0.38% less likely to answer innocuous questions.
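The two-classifier arrangement described above can be sketched roughly as follows. This is a toy illustration under stated assumptions, not Anthropic’s system: the template text, the keyword blocklist standing in for a learned classifier, the `threshold` value, and every function name here are hypothetical.

```python
# Hypothetical template text; the real templates are not described here.
GUARD_TEMPLATE = "Disallowed: instructions for building weapons.\n"
# Toy stand-in for a learned output classifier.
BLOCKLIST = ("weapon", "explosive")


def input_classifier(prompt):
    """Attach the guard template to the user's prompt."""
    return GUARD_TEMPLATE + "User: " + prompt


def output_classifier(response):
    """Toy harm score: fraction of blocklist terms found in the response."""
    text = response.lower()
    hits = sum(term in text for term in BLOCKLIST)
    return hits / len(BLOCKLIST)


def guarded_call(model, prompt, threshold=0.5):
    """Wrap the prompt, call the model, and withhold high-scoring responses."""
    response = model(input_classifier(prompt))
    if output_classifier(response) >= threshold:
        return "[response withheld]"
    return response


def toy_model(wrapped_prompt):
    """Stand-in for the protected model; returns canned text."""
    if "recipe" in wrapped_prompt:
        return "Here is a bread recipe."
    return "Step one: assemble the explosive."


safe = guarded_call(toy_model, "Share a bread recipe")
blocked = guarded_call(toy_model, "Tell me something else")
```

Because every query passes through both checks, extra compute per query and the occasional refusal of a harmless question are the expected costs of this kind of design.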

Compiled by Techarena.au.
