Each Sunday, NPR’s Will Shortz, the crossword puzzle expert from The New York Times, engages thousands of listeners through a popular segment known as the Sunday Puzzle. Although these puzzles are crafted to be solvable without extensive prior knowledge, they often present a formidable challenge even for experienced participants.

This has led some experts to propose that these puzzles might serve as an effective gauge for assessing the problem-solving capabilities of AI.

A recent study conducted by researchers from Wellesley College, Oberlin College, the University of Texas at Austin, Northeastern University, Charles University, and the startup Cursor established an AI benchmark using challenges sourced from Sunday Puzzle episodes. The findings revealed unexpected insights, such as how reasoning models—including OpenAI’s o1—can sometimes “give up” and provide answers they acknowledge to be incorrect.

“Our goal was to create a benchmark featuring problems that are understandable to humans with just general knowledge,” stated Arjun Guha, a computer science faculty member at Northeastern and co-author of the study, in an interview with TechCrunch.

The AI sector is currently grappling with a benchmarking dilemma. Many existing tests used to assess AI models probe skills, such as advanced math and science, that have little bearing on the everyday user. Moreover, several benchmarks, including recently released ones, are nearing saturation.

One of the benefits of a public radio quiz like the Sunday Puzzle is its avoidance of obscure knowledge, and its challenges are formulated in a way that prevents models from relying solely on “rote memory,” Guha explained.

“These problems are particularly challenging because meaningful progress often hinges on arriving at a solution — that’s when all the pieces fit together,” Guha remarked. “This demands a mix of insight and a methodical elimination process.”

No benchmark is without its flaws. The Sunday Puzzle is inherently U.S.-centric and available only in English. Additionally, since the quizzes are accessible to the public, there is a chance that models trained on them could “cheat,” although Guha noted he has not encountered evidence to support this.

“Every week brings new questions, and we can anticipate that the most recent challenges will be genuinely novel,” he added. “Our intention is to keep the benchmark dynamic and monitor how model performance evolves over time.”

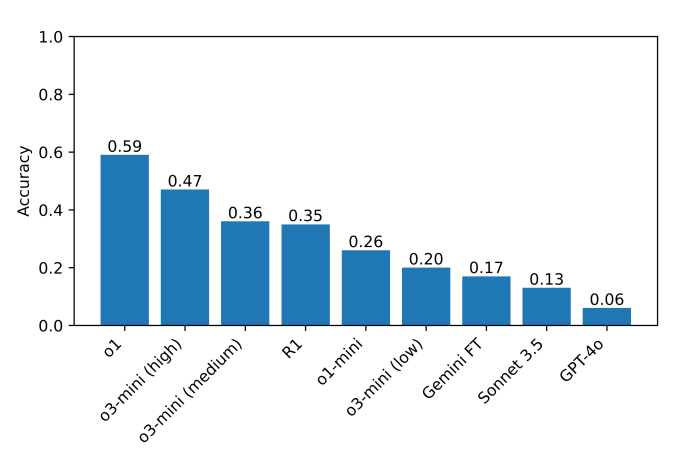

The researchers’ benchmark consists of approximately 600 Sunday Puzzle riddles. On it, reasoning models such as o1 and DeepSeek’s R1 significantly outperform their counterparts. Reasoning models check their own work before producing an answer, which helps them avoid some of the pitfalls that commonly trip up AI models. The trade-off is speed: they typically take seconds to minutes longer to respond.

At least one model, DeepSeek’s R1, admits to providing incorrect solutions to certain Sunday Puzzle questions. R1 explicitly states “I give up,” followed by an answer that appears to be chosen at random — a response many humans might sympathize with.

The models make other perplexing choices, such as giving an incorrect answer, immediately retracting it, attempting a better one, and failing again. They can also get stuck “thinking” indefinitely, offer nonsensical justifications for their answers, or arrive at a correct answer quickly and then inexplicably go on to consider alternatives.

“In tough problems, R1 literally expresses that it’s feeling ‘frustrated,’” Guha noted. “It’s amusing to witness a model mimicking what a human might express. The impact of this ‘frustration’ on reasoning quality in models remains to be seen.”

Currently, the leading model on this benchmark is o1, with a score of 59%, followed by the new o3-mini set to high “reasoning effort” at 47%. (R1 scored 35%.) The researchers plan to extend their testing to additional reasoning models to help pinpoint where these models can be improved.
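For readers curious how percentages like these might be computed, here is a minimal sketch of exact-match scoring in Python. The article does not describe how the researchers actually grade answers, so the normalization rules and the function names `normalize` and `accuracy` are illustrative assumptions, not the study's method.

```python
import string


def normalize(answer: str) -> str:
    """Lowercase, drop ASCII punctuation, and collapse whitespace.

    Assumption: this normalization is for illustration only; the
    study's real grading rules are not given in the article.
    """
    cleaned = answer.lower().translate(str.maketrans("", "", string.punctuation))
    return " ".join(cleaned.split())


def accuracy(predictions: list[str], references: list[str]) -> float:
    """Return the fraction of predictions that match their reference answer."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        return 0.0
    correct = sum(
        normalize(pred) == normalize(ref)
        for pred, ref in zip(predictions, references)
    )
    return correct / len(references)


if __name__ == "__main__":
    # Hypothetical model outputs and answer keys, not real benchmark data.
    preds = ["Alpha", "beta!", "wrong"]
    refs = ["alpha", "Beta", "gamma"]
    print(f"{accuracy(preds, refs):.0%}")
```

Under this scheme, a score of 59% on roughly 600 puzzles would correspond to about 350 correct answers.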

“Exceptional reasoning ability doesn’t require a PhD, so we should be able to create reasoning benchmarks that are accessible without such qualifications,” Guha emphasized. “A more inclusive benchmark allows a broader pool of researchers to understand and interpret the findings, potentially leading to improved solutions down the line. Furthermore, as advanced models are increasingly integrated into various facets of everyday life, it is crucial that the general public can intuitively grasp what these models can and cannot achieve.”

Compiled by Techarena.au.
