Every Sunday, NPR’s Will Shortz, best known as The New York Times’ crossword puzzle editor, hosts a segment called the Sunday Puzzle. Although the puzzles are designed to be solvable without much prior knowledge, they are often demanding even for skilled contestants.

That difficulty is why some experts see these puzzles as a promising way to test the limits of AI’s problem-solving abilities.

In a recent study, researchers from Wellesley College, Oberlin College, the University of Texas at Austin, Northeastern University, and the startup Cursor created an AI benchmark using riddles from Sunday Puzzle episodes. The findings include some surprises, such as the tendency of some “reasoning models,” including OpenAI’s o1, to sometimes “give up” and provide answers they know to be incorrect.

“Our goal was to establish a benchmark comprised of challenges that can be understood by humans using only general knowledge,” Arjun Guha, a computer science faculty member at Northeastern and a co-author of the study, told TechCrunch.

The AI industry is currently grappling with the limits of its benchmarks. Many of the tests used to evaluate AI models probe advanced skills, such as competency on PhD-level math and science questions, that have little relevance for the average user. Meanwhile, many benchmarks, even ones released recently, are quickly approaching saturation.

The advantages of a radio quiz game like the Sunday Puzzle are that it doesn’t demand specialized knowledge and that its puzzles are phrased in a way that prevents models from relying on “rote memory” to solve them, explained Guha.

“The challenge with these puzzles lies in the fact that it’s hard to make substantial progress until you find the solution; it’s at that moment that everything falls into place,” Guha noted. “This demands a blend of insight and a process of elimination.”

Of course, no benchmark is flawless. The Sunday Puzzle is U.S.-centric and available only in English. And because the quizzes are publicly available, models trained on them could “cheat” in a sense, though Guha says he hasn’t seen any evidence of this.

“New questions are introduced each week, ensuring that the latest queries remain genuinely novel,” he added. “We plan to continuously refresh the benchmark and monitor shifts in model performance over time.”

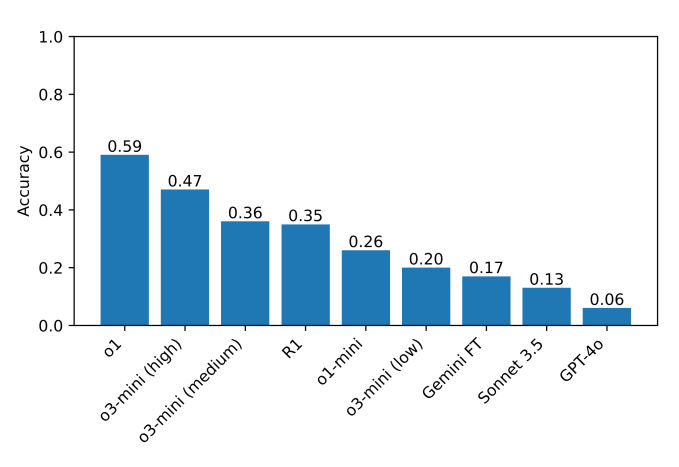

The researchers’ benchmark, which consists of roughly 600 Sunday Puzzle riddles, shows that reasoning models such as o1 and DeepSeek’s R1 far outperform the rest. Reasoning models thoroughly fact-check themselves before giving an answer, which helps them avoid some of the pitfalls that trip up typical AI models. The trade-off is that they take longer to reach a solution, typically seconds to minutes longer.

Interestingly, DeepSeek’s R1 sometimes gives answers it knows to be wrong. It states, “I give up,” and then offers a seemingly random incorrect answer, a behavior many humans can relate to.

The models exhibit other peculiar behavior too, such as giving a wrong answer, immediately retracting it, trying to find a better one, and failing again. They may also get stuck “thinking” indefinitely, give nonsensical justifications for their answers, or arrive at the correct answer right away and then go on to consider alternatives for no apparent reason.

“In challenging scenarios, R1 explicitly expresses its ‘frustration,’” Guha remarked. “It’s amusing to observe how a model mimics a human’s expressions. The impact of such ‘frustration’ on reasoning quality remains to be explored.”

The best-performing model on the benchmark is currently o1, with a score of 59%, followed by the recently released o3-mini set to high “reasoning effort,” at 47%. (R1 scored 35%.) As a next step, the researchers plan to extend their testing to additional reasoning models, which they hope will help identify areas where these models could be improved.

“Reasoning doesn’t require a PhD to master, so we should be able to create reasoning benchmarks accessible to a more general audience,” Guha emphasized. “A benchmark that is more widely accessible enables a broader range of researchers to comprehend and analyze the results, potentially leading to improved solutions down the line. Moreover, as cutting-edge models are increasingly implemented in contexts that impact everyone, it’s crucial for the public to intuitively grasp what these models can, and cannot, achieve.”

Compiled by Techarena.au.
