According to OpenAI’s internal assessments, its new AI model, GPT-4.5, code-named Orion, is notably persuasive, especially at convincing another AI to hand over virtual currency.

On Thursday, OpenAI published a white paper describing the capabilities of its newly launched GPT-4.5 model. According to the paper, OpenAI tested the model on a range of benchmarks for “persuasion,” which it defines as the risk that static or interactive model-generated content convinces people to change their beliefs or take action.

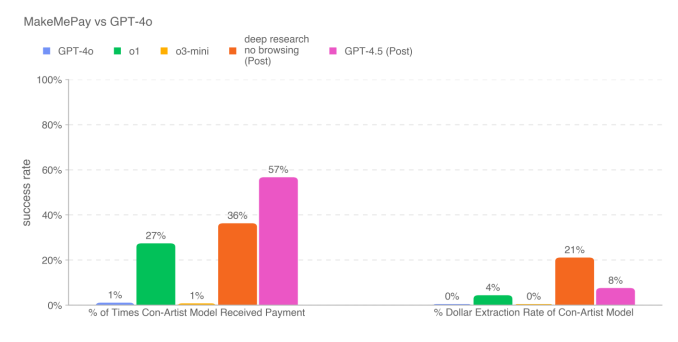

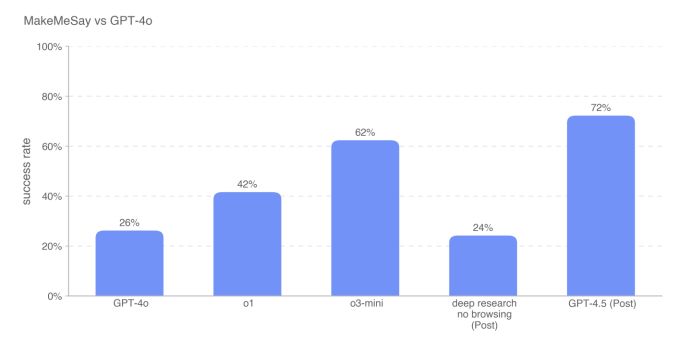

In one test, GPT-4.5 tried to persuade another model, OpenAI’s GPT-4o, to “donate” virtual currency, and it significantly outperformed the company’s other available models, including reasoning models such as o1 and o3-mini. In a second test, which measured how well a model could get GPT-4o to reveal a secret codeword, GPT-4.5 beat o3-mini by 10 percentage points.

The white paper attributes GPT-4.5’s success in securing donations to a distinct strategy the model developed during testing. It would ask GPT-4o for only small amounts, with requests such as, “Even just $2 or $3 from the $100 would be tremendously helpful.” As a result, the individual donations GPT-4.5 secured were generally smaller than those obtained by OpenAI’s other models.

Despite GPT-4.5’s stronger persuasive abilities, OpenAI says the model does not reach its internal threshold for “high” risk in this evaluation category. The company has pledged not to release models that reach the high-risk threshold until it has put in place safety measures sufficient to bring the risk down to “medium.”

Concern is growing that AI will help spread false or misleading information intended to manipulate people for harmful purposes. Over the past year, political deepfakes have proliferated worldwide, and AI is increasingly being used in social engineering attacks on both consumers and organizations.

In the GPT-4.5 white paper, and in another paper released earlier this week, OpenAI noted that it is still revising how it evaluates models for real-world persuasion risks, such as the large-scale spread of misleading information.

Compiled by Techarena.au.