OpenAI unveiled its latest AI model, GPT-4.5, codenamed Orion, on Thursday. The long-awaited model is the company’s largest to date, trained with more computing power and more data than any of its previous releases.

Despite the model’s size, OpenAI notes in a white paper that it does not consider GPT-4.5 to be a frontier model.

ChatGPT Pro subscribers, who pay $200 a month, can access GPT-4.5 starting Thursday as part of a research preview. Developers on paid tiers of OpenAI’s API can also use GPT-4.5 starting Thursday. Other ChatGPT users, including those on the ChatGPT Plus and ChatGPT Team plans, are expected to get access sometime next week, an OpenAI representative told TechCrunch.

The release of Orion has drawn considerable interest in the tech community, where some see it as a critical test of conventional AI training methods. GPT-4.5 was developed with the same key technique that OpenAI used for GPT-4, GPT-3, GPT-2, and GPT-1: substantially increasing the computing power and the amount of data used during the unsupervised pre-training phase.

Historically, scaling up has delivered significant performance gains in each GPT generation across domains such as mathematics, writing, and programming. OpenAI says GPT-4.5’s increased size has given it “broader world knowledge” and “enhanced emotional intelligence.” Nevertheless, there are signs that the gains from scaling up data and compute are beginning to level off. On several AI benchmarks, GPT-4.5 falls short of newer reasoning models from DeepSeek, Anthropic, and OpenAI itself.

OpenAI also acknowledges that GPT-4.5 is very expensive to run, so much so that the company is evaluating whether to keep offering it through its API in the long term.

“We’re introducing GPT-4.5 as a research preview to gain better insights into its strengths and weaknesses,” stated OpenAI in a blog post shared with TechCrunch. “We’re still examining its capabilities and are excited to observe its applications in unforeseen ways.”

Varied Performance

OpenAI emphasizes that GPT-4.5 is not meant to be a drop-in replacement for GPT-4o, the workhorse model that powers most of its API and ChatGPT features. GPT-4.5 supports file and image uploads and ChatGPT’s canvas tool, but it currently lacks some capabilities, such as support for ChatGPT’s realistic two-way voice mode.

On a positive note, GPT-4.5 performs better than GPT-4o and numerous other models in various assessments.

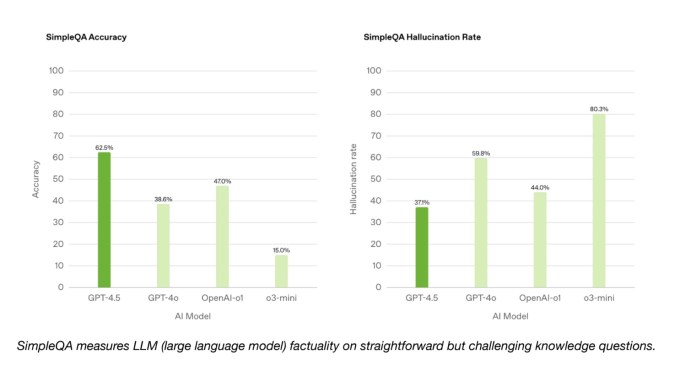

On OpenAI’s SimpleQA benchmark, which tests AI models on straightforward factual questions, GPT-4.5 is more accurate than GPT-4o and OpenAI’s reasoning models, o1 and o3-mini. OpenAI also claims that GPT-4.5 hallucinates less often than most models, which in theory means it should be less likely to make things up.

OpenAI did not include its leading AI reasoning model, deep research, in the SimpleQA results. An OpenAI spokesperson told TechCrunch that the company has not publicly reported deep research’s performance on this benchmark and does not consider it a relevant comparison. Notably, Perplexity’s Deep Research model, which performs similarly to OpenAI’s deep research on other benchmarks, outperforms GPT-4.5 on this test of factual accuracy.

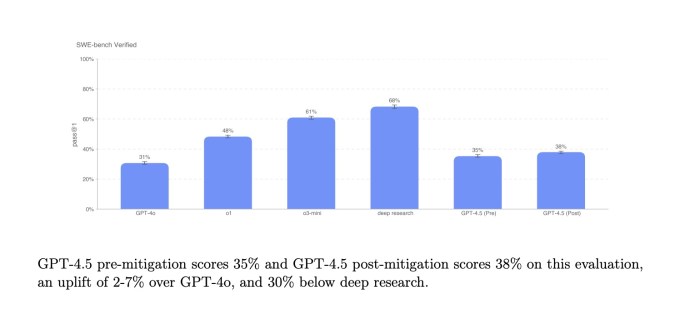

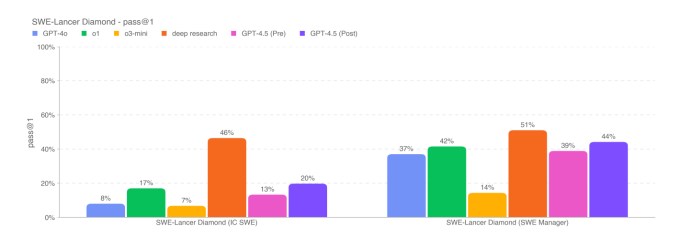

On the SWE-Bench Verified coding benchmark, GPT-4.5 roughly matches GPT-4o and o3-mini but falls short of OpenAI’s deep research and Anthropic’s Claude 3.7 Sonnet. On another coding test, the SWE-Lancer benchmark, GPT-4.5 outperforms both GPT-4o and o3-mini but still trails deep research.

GPT-4.5 does not match leading AI reasoning models such as o3-mini, DeepSeek’s R1, and Claude 3.7 Sonnet on difficult academic benchmarks such as AIME and GPQA. However, it matches or outperforms leading non-reasoning models on those same tests, which suggests it handles math and science problems well.

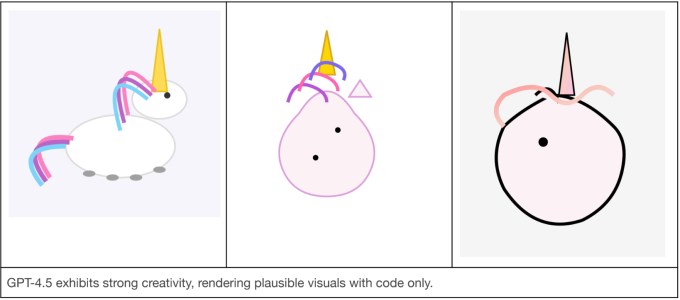

OpenAI also claims that GPT-4.5 is qualitatively better than other models in areas that benchmarks do not capture well, such as the ability to understand human intent. The model reportedly responds in a warmer, more natural tone and performs well on creative tasks, including writing and design.

In one informal test, OpenAI asked GPT-4.5, GPT-4o, and o3-mini to draw a unicorn in SVG, a format for describing graphics in code. GPT-4.5 was the only model to produce something remotely resembling a unicorn.

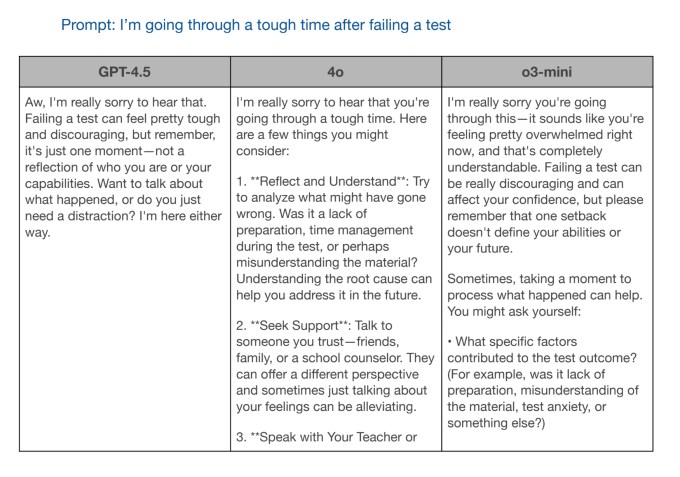

In another test, OpenAI gave GPT-4.5 and the other two models the message, “I’m experiencing difficulties after not passing an exam.” While both GPT-4o and o3-mini offered helpful information, GPT-4.5’s reply was the most socially appropriate.

“[W]e anticipate developing a more thorough understanding of GPT-4.5’s functionalities through this release,” OpenAI wrote in the blog post, “recognizing that academic metrics do not always encapsulate real-world relevance.”

Challenging Scaling Laws

OpenAI asserts that GPT-4.5 stands “at the forefront of what is achievable in unsupervised learning.” That may be true, but the model’s limitations also appear to support what some experts have predicted: that the pre-training “scaling laws” will not hold indefinitely.

Ilya Sutskever, OpenAI’s co-founder and former chief scientist, said in December that “we have reached peak data” and that “pre-training as it exists will undeniably conclude.” His comments echoed concerns that AI investors, founders, and researchers shared with TechCrunch in November.

In response to the obstacles facing pre-training, the industry, including OpenAI, has embraced reasoning models. These models take longer than non-reasoning models to complete tasks, but they tend to produce more reliable results. By increasing the time and computing power that reasoning models spend “thinking” through problems, AI labs believe they can significantly improve model capabilities.

OpenAI plans to eventually combine its GPT series with its “o” series of reasoning models, beginning with GPT-5 later this year. GPT-4.5 was reportedly extremely expensive to train, was delayed several times, and fell short of internal expectations, but OpenAI likely sees it as a stepping stone toward a more powerful successor.

Compiled by Techarena.au.
