The National Institute of Standards and Technology (NIST), the U.S. Department of Commerce agency that develops and tests technology for the U.S. government, companies, and the broader public, has released an updated version of its testbed for measuring how malicious attacks might degrade the performance of an AI system. The tool focuses in particular on attacks that tamper with the training data of AI models.

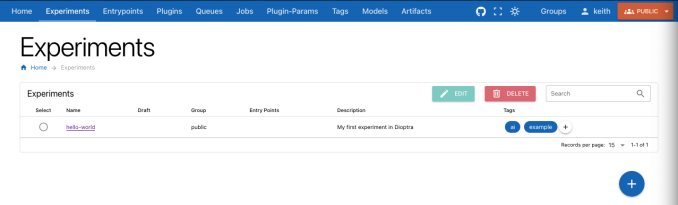

Named Dioptra after the ancient astronomical and surveying instrument, the modular, open-source, web-based tool was first released in 2022. It is meant to help organizations that train AI models, as well as the people who use those models, evaluate, understand, and document vulnerabilities in AI systems. According to NIST, Dioptra can be used to benchmark and explore models, and it provides a common framework for exposing models to simulated threats in a controlled “red-teaming” environment.

“Assessing the resilience of machine learning models against adversarial intrusions stands as one of Dioptra’s objectives,” NIST said in a press release. “The freely available open-source software could empower the broader community, including governmental bodies and small to mid-sized enterprises, to conduct thorough assessments, hence validating AI developers’ assertions regarding the robustness of their systems.”

Dioptra was released alongside documents from NIST and the newly formed NIST AI Safety Institute that outline ways to mitigate certain dangers of AI, such as its misuse to generate nonconsensual explicit content. The release follows the UK AI Safety Institute’s launch of Inspect, a toolkit with similar goals for model evaluation and safety. The US and UK continue to collaborate on testing methodologies for advanced AI models, a partnership highlighted at the AI Safety Summit at Bletchley Park in November 2023.

Dioptra is also a product of President Joe Biden’s executive order on AI, which directs NIST to help with the testing of AI systems. The order also establishes standards for AI safety and security, including a requirement that companies developing models (e.g., Apple) notify the federal government and share the results of all safety evaluations before the models are deployed to the public.

Establishing benchmarks for AI is difficult, not least because the creators of today’s most advanced models keep the inner workings of those models undisclosed. A recent report from the Ada Lovelace Institute, a UK-based research institute that studies AI, found that evaluations alone are not enough to determine the real-world safety of an AI system, in part because current policies let AI vendors choose which evaluations to conduct.

NIST does not claim that Dioptra can eliminate all risks associated with models. The agency does suggest, however, that the tool can shed light on which kinds of attacks might impair an AI system’s performance and can quantify the extent of that impact.

Dioptra has a significant limitation, however: it currently works only with models that can be downloaded and run locally, such as those in Meta’s expanding Llama family. Models accessible only through an API, such as OpenAI’s GPT-4o, are not supported, at least for now.

Compiled by Techarena.au.
