The ouroboros, the legendary serpent that eats its own tail, invites an obvious skepticism: self-consumption is symbolically rich but hardly sustainable in practice. The metaphor applies to artificial intelligence as well. According to recent research, AI models may be at risk of “model collapse” when they are trained iteratively on data that they themselves generated.

In a paper published in Nature, a team of British and Canadian researchers led by Ilia Shumailov of Oxford shows that today's machine learning models are fundamentally vulnerable to a syndrome they call “model collapse.” As they write in the paper's introduction:

Our findings highlight that indiscriminate learning from data procured by other models culminates in “model collapse” — a progressive detriment where, over intervals, models deviate from the authentic data distribution…

To understand the phenomenon, it helps to look at how these models work and why that makes them vulnerable.

At their core, AI models are pattern-matching systems: they learn patterns in their training data, then match a prompt against those patterns and fill in the most likely continuation. This holds whether the prompt asks for a recipe or for a list of U.S. presidents ordered by age at inauguration. Image generators work on the same principle, though the details differ.

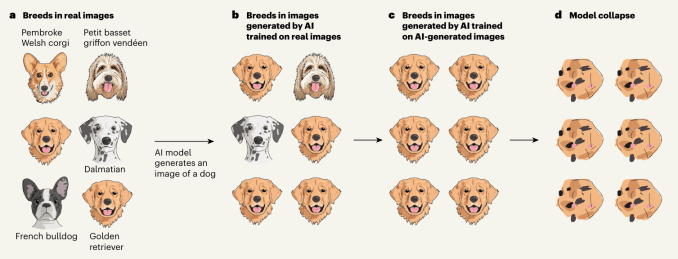

However, these models gravitate toward the most common output. Ask for a snickerdoodle recipe and you get the most conventional one; ask for a picture of a dog and you will probably get a popular breed such as a golden retriever or a Labrador.
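One simple way to picture this bias is to sharpen a frequency distribution, the way low-temperature sampling does. The sketch below is only an illustration: the breed frequencies are invented, `sharpen` is a hypothetical helper, and real generative models are not literally implemented this way.

```python
import math

def sharpen(probs, temperature):
    """Rescale a categorical distribution as p_i^(1/T), then renormalize.

    A temperature below 1 pushes probability mass toward the most
    frequent categories; a temperature of 1 leaves the distribution unchanged.
    """
    weights = {k: math.pow(p, 1.0 / temperature) for k, p in probs.items()}
    total = sum(weights.values())
    return {k: w / total for k, w in weights.items()}

# Hypothetical breed frequencies in a training set (illustrative numbers only).
training_freq = {
    "golden retriever": 0.40,
    "labrador": 0.30,
    "poodle": 0.20,
    "basenji": 0.10,
}

# Assumed temperature of 0.5, chosen only to make the effect visible.
sharpened = sharpen(training_freq, temperature=0.5)
for breed, p in sharpened.items():
    print(f"{breed:17s} train={training_freq[breed]:.2f} output={p:.2f}")
```

With these made-up numbers, the golden retriever's share rises from 0.40 to about 0.53, while the basenji's falls from 0.10 to about 0.03: the common case becomes more common and the rare case rarer.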

This tendency matters more as AI-generated content spreads across the web and newer AI systems ingest and train on that increasingly homogeneous material. Repeated exposure skews their sense of what is typical: a model that sees golden retrievers overrepresented in its training data will overrepresent them even further in its own output.

An illustration accompanying an article in Nature depicts this process.

Language models and other model types are affected in the same way, since they too favor the most common data in their training sets when generating responses. That was not a problem until it collided with the sheer volume of AI-generated material now spreading across the web.

In short, if models keep training on each other's output, perhaps without even knowing it, their quality will likely degrade step by step until they collapse. The researchers provide numerous examples and possible mitigations, but they also suggest that model collapse is inevitable, at least in theory.
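The feedback loop can be illustrated with a toy simulation. In the sketch below, "training" is reduced to counting how often each category appears in a finite sample drawn from the previous generation's output. This is an assumed stand-in for real training, not the experiments from the paper, and the breed frequencies, sample size, and function names are all hypothetical.

```python
import random
from collections import Counter

def next_generation(freq, sample_size, rng):
    """Draw a finite sample from the current model, then re-estimate frequencies."""
    breeds = list(freq)
    weights = [freq[b] for b in breeds]
    samples = rng.choices(breeds, weights=weights, k=sample_size)
    counts = Counter(samples)
    return {b: counts[b] / sample_size for b in breeds}

def simulate(freq, generations, sample_size, seed=0):
    """Return the list of frequency tables, starting with the original data."""
    rng = random.Random(seed)
    history = [freq]
    for _ in range(generations):
        freq = next_generation(freq, sample_size, rng)
        history.append(freq)
    return history

# Hypothetical "human" data distribution (illustrative numbers only).
original = {
    "golden retriever": 0.40,
    "labrador": 0.30,
    "poodle": 0.20,
    "basenji": 0.08,
    "otterhound": 0.02,
}

history = simulate(original, generations=30, sample_size=50)
for gen in (0, 10, 20, 30):
    surviving = [b for b, p in history[gen].items() if p > 0]
    print(f"generation {gen:2d}: {len(surviving)} breeds remain -> {surviving}")
```

The key property is that a category whose estimated frequency reaches zero is never sampled again, so the set of surviving breeds can only shrink from one generation to the next. Rare categories are the most likely to be lost first, which mirrors the loss of diversity described above.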

Results in practice may differ from the experiments, but the possibility should concern everyone working in AI. The diversity and depth of training data are increasingly recognized as pivotal to a model's quality. If generating more data risks accelerating model collapse, does that place a fundamental limit on what today's AI can achieve? And if collapse does begin, detecting and countering it would be difficult.

There are probably viable ways to prevent or reduce these risks, though that does not make the underlying concern go away.

Qualitative and quantitative benchmarks for data sourcing and diversity would help, but standardized measures are still a long way off. Watermarking AI-generated content could help keep other AI systems from reusing it, but effective methods for such marking, particularly in images, have yet to be widely established.
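As one possible example of a quantitative diversity measure, the sketch below computes the Shannon entropy of category labels in a dataset. This is an assumption made for illustration, not a benchmark proposed in the research or an established standard, and the label lists are invented.

```python
import math
from collections import Counter

def shannon_entropy(labels):
    """Entropy in bits of the label distribution; higher means more diverse."""
    counts = Counter(labels)
    total = len(labels)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())

# Hypothetical label sets (illustrative only).
varied = ["golden retriever", "labrador", "poodle", "basenji"]
homogeneous = ["golden retriever"] * 3 + ["labrador"]

print(f"varied:      {shannon_entropy(varied):.2f} bits")
print(f"homogeneous: {shannon_entropy(homogeneous):.2f} bits")
```

Four equally represented breeds give 2 bits, the maximum for four categories, while the skewed set scores lower. A falling score across successive data snapshots could serve as a rough warning sign, although a single number cannot capture every aspect of diversity.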

Moreover, competition may discourage companies from sharing this kind of information. They may prefer to hoard original, human-generated data to secure a “first mover advantage.”

Taking model collapse seriously is imperative to preserve the benefits derived from large-scale web-scraped data training. The value tied to authentic human interaction data will surge, especially against the backdrop of content generated by Large Language Models (LLMs) dominating internet-sourced datasets.

… the challenge of training newer LLM versions may intensify without access to pre-technological adoption web data or directly sourced human-generated data at scale.

This adds one more item to the list of serious challenges facing AI models, and one more reason to ask whether current methods can sustainably produce the superintelligence of the future.

Compiled by Techarena.au.
