OpenAI’s ChatGPT may be less energy-hungry than previously assumed. According to a new study, though, how much energy it uses depends heavily on how people use it and on which AI models answer their queries.

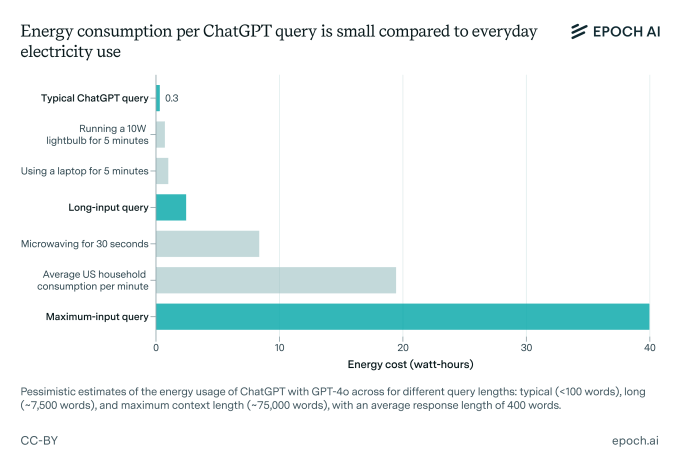

Epoch AI, a nonprofit AI research institute, set out to estimate how much energy a typical ChatGPT query consumes. A commonly cited figure holds that ChatGPT needs about 3 watt-hours of energy to answer a single question, roughly ten times as much as a Google search.

Epoch AI argues that this estimate is inflated.

Using OpenAI’s latest default model, GPT-4o, as a reference, Epoch found that the average ChatGPT query consumes around 0.3 watt-hours, less than many household appliances.

“When compared to regular home appliances or essential activities like heating, cooling, or driving, energy consumption isn’t particularly significant,” Joshua You, the Epoch data analyst who led the analysis, told TechCrunch.

AI’s energy use, and its environmental impact more broadly, has become a hotly debated topic as AI companies rapidly expand their infrastructure. Recently, more than 100 organizations published an open letter urging the AI industry and regulators to ensure that new AI data centers do not deplete natural resources or force utilities to rely on fossil fuels.

You said his analysis was prompted by what he saw as outdated earlier research. He noted that the reports behind the 3-watt-hour estimate likely rested on faulty assumptions, such as OpenAI running its models on older, less efficient hardware.

“There has been a significant amount of public discussion acknowledging the future energy demands of AI, but current energy consumption hasn’t been accurately represented,” You stated. “Some of my peers recognized that the most frequently reported estimate of 3 watt-hours per query was based on somewhat outdated research and lacked rigorous analysis.”

Epoch’s 0.3-watt-hour figure is still an approximation, since OpenAI has not published the details needed for a precise calculation.

The analysis also does not account for the extra energy used by ChatGPT features such as image generation or input processing. You acknowledged that “extended input” queries, such as those with large files attached, likely consume more energy upfront than a typical question.

You does, however, expect ChatGPT’s baseline energy consumption to rise.

“As AI evolves, the energy required for training will likely increase, and future AI may take on more complex and varied tasks—resulting in a higher demand for usage than we see today,” he stated.

Despite recent efficiency gains, the expected scale of AI deployment will likely require massive, power-hungry infrastructure expansion. According to a report from Rand, AI data centers may need close to all of California’s 2022 power capacity (68 GW) within the next two years. By 2030, training a single frontier model could demand power output equivalent to that of eight nuclear reactors (8 GW), the same report says.

ChatGPT alone serves an enormous and growing number of users, which places heavy demands on OpenAI’s servers. OpenAI and its investment partners plan to spend billions of dollars on new AI data centers over the next few years.

OpenAI, like the rest of the AI industry, is also shifting its focus toward reasoning models. These are generally more capable in the tasks they can complete but require more computing power to run. Whereas models such as GPT-4o respond almost instantly, reasoning models may “think” for several seconds to minutes before answering, which consumes more computing power and therefore more energy.

“Reasoning models will increasingly tackle tasks that older models cannot manage and generate more data in the process, necessitating the establishment of additional data centers,” You added.

OpenAI has begun releasing more energy-efficient reasoning models such as o3-mini. It seems unlikely, however, that these efficiency gains will offset the rising energy demands of reasoning models’ longer processing times and of growing AI usage worldwide.

For people concerned about the energy footprint of their AI use, You suggests using apps like ChatGPT less often, or choosing models that need less computing power, where that is practical.

“Consider utilizing smaller AI models like [OpenAI’s] GPT-4o-mini,” You advised, “and try to limit their usage in situations that require heavy data processing or generation.”

Compiled by Techarena.au.
