OpenAI has unveiled a new AI “agent” designed to help users conduct in-depth, complex research with ChatGPT, the company’s AI-powered chatbot platform.

Fittingly, it is called deep research.

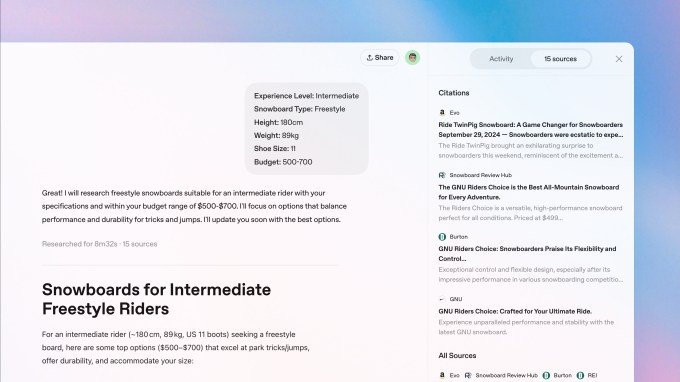

In a recent blog post shared on Sunday, OpenAI explained that this innovative feature is tailored for individuals involved in intensive knowledge work across sectors such as finance, science, policy, and engineering, where accurate and detailed research is crucial. Additionally, the company highlighted its potential benefits for those making significant purchases necessitating extensive research, such as vehicles, home appliances, and furniture.

Essentially, ChatGPT deep research is aimed at scenarios where quick responses or summaries won’t suffice; instead, it facilitates a thorough evaluation of information from various websites and sources.

OpenAI made deep research available to ChatGPT Pro users today, capped at 100 queries per month. Support for Plus and Team users will follow, with Enterprise coming after that. According to OpenAI, the Plus rollout is expected within about a month, and the query limits for paid subscribers should “increase significantly” soon. The launch is geo-restricted: OpenAI has not given a release date for ChatGPT users in the U.K., Switzerland, and the European Economic Area.

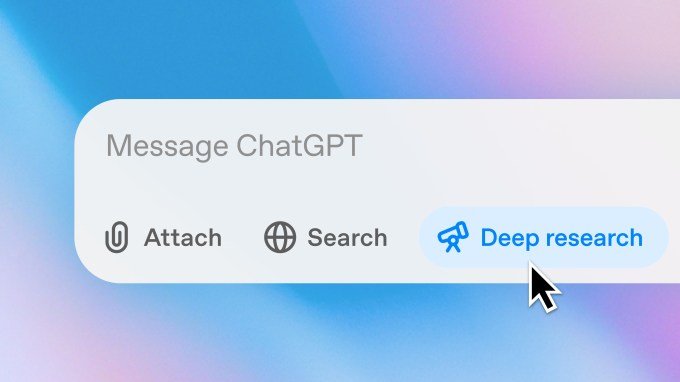

To use ChatGPT deep research, select “deep research” in the composer and enter your query, optionally attaching files or spreadsheets. The feature is currently available only on the web, with mobile and desktop app integration expected later this month. Answers can take anywhere from five to thirty minutes, and you will receive a notification once the search is complete.

Presently, the outputs of ChatGPT deep research are text-only. However, OpenAI plans to incorporate embedded images, data visualizations, and additional “analytic” outputs in the near future. There are also plans to connect with “more specialized data sources,” including subscription-based and internal references.

A crucial question arises: how accurate is ChatGPT deep research? AI is not without flaws; it is susceptible to hallucinations and errors that could be particularly detrimental in a “deep research” context. Consequently, OpenAI has committed to ensuring that each output from ChatGPT deep research will be “thoroughly documented, complete with clear citations and an overview of [the] reasoning, making it straightforward to reference and verify the information.”

The effectiveness of these safeguards in addressing AI inaccuracies remains to be seen. OpenAI’s AI-driven web search function, ChatGPT Search, has previously produced errors and inaccurate responses. Tests conducted by TechCrunch revealed that ChatGPT Search yielded less effective results compared to Google Search for certain inquiries.

To improve the accuracy of deep research, OpenAI is using a specialized version of its recently introduced o3 “reasoning” AI model, which was trained through reinforcement learning on “real-world tasks necessitating browser and Python tool usage.” Reinforcement learning teaches a model through trial and error to achieve a specific goal; as the model gets closer to that goal, it receives rewards that reinforce the behavior that got it there.
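For readers unfamiliar with the idea, the trial-and-error loop behind reinforcement learning can be illustrated with a toy example. The sketch below is an epsilon-greedy "bandit" learner, a textbook simplification and not OpenAI's training method; the function name `train_bandit`, the payoff values, and the parameters are all hypothetical and chosen only for illustration.

```python
import random


def train_bandit(rewards, steps=1000, epsilon=0.1, seed=0):
    """Learn which action pays best purely from trial and error."""
    rng = random.Random(seed)
    values = [0.0] * len(rewards)  # running estimate of each action's reward
    counts = [0] * len(rewards)    # how many times each action was tried

    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: occasionally try a random action.
            action = rng.randrange(len(rewards))
        else:
            # Exploit: pick the action with the highest estimate so far.
            action = max(range(len(rewards)), key=lambda a: values[a])

        reward = rewards[action]   # feedback from the (hypothetical) environment
        counts[action] += 1
        # Incremental mean: nudge the estimate toward the observed reward.
        values[action] += (reward - values[action]) / counts[action]

    return values


if __name__ == "__main__":
    # Hypothetical payoffs: the last action is closest to the "goal".
    estimates = train_bandit([0.1, 0.5, 0.9])
    best = max(range(len(estimates)), key=lambda a: estimates[a])
    print("learned estimates:", estimates)
    print("preferred action:", best)
```

The learner is never told which action is best. It only sees the reward that follows each attempt and gradually shifts toward whatever has paid off, which is the same basic principle, at a vastly smaller scale, as rewarding a model for getting closer to a research goal.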

OpenAI asserts that this variant of the o3 model is “optimized for web browsing and data analysis,” emphasizing that it can “leverage reasoning to search, interpret, and analyze vast amounts of text, images, and PDFs found online, adjusting as necessary based on the information it encounters.” The model can also browse user-uploaded files, plot and modify graphs using a Python tool, embed both generated graphs and website images in its responses, and cite specific sentences or passages from its sources.

OpenAI reports that it evaluated ChatGPT deep research on Humanity’s Last Exam, a benchmark of more than 3,000 advanced questions across various academic disciplines. The o3 model powering deep research achieved an accuracy of 26.6%. That may look like a failing score, but the exam was intentionally designed to be harder than other benchmarks in order to stay ahead of model progress. According to OpenAI, the deep research o3 model outscored Gemini Thinking (6.2%), Grok-2 (3.8%), and OpenAI’s own GPT-4o (3.3%).

Nonetheless, OpenAI acknowledges that ChatGPT deep research has limitations, including occasional inaccuracies and misinterpretations. The feature may struggle to distinguish credible information from mere speculation, and it often fails to express uncertainty when it should. Formatting errors in reports and citations may also occur.

For those concerned about the effects of generative AI on learners or anyone seeking information online, this type of detailed, well-cited output may be more appealing than a simple chatbot summary with no citations. However, it remains to be seen whether most users will actually analyze and verify the output, or whether they will simply treat it as polished text to copy and paste.

Interestingly, Google introduced a comparable AI feature with the same name less than two months ago.


Compiled by Techarena.au.
