How many times does the letter “r” appear in the word “strawberry”? According to AI products such as GPT-4o and Claude, the answer is twice. (The correct answer is three.)

Large language models can compose narratives and solve mathematical problems in seconds. They process vast amounts of data faster than a person can open a book. Yet these advanced systems sometimes fail in ways that become viral jokes online, reassuring us that perhaps the era of AI supremacy isn’t as imminent as feared.

The failure of large language models (LLMs) to handle basic questions about letters and syllables points to a broader truth we often overlook: these systems lack consciousness. They do not think as humans do. They are not human, nor are they particularly humanlike.

Most LLMs are built on transformers, a kind of deep learning architecture. Transformer models break text into units called tokens, which can be whole words, syllables, or single letters, depending on the model.

“These models incorporate transformer architectures, which crucially don’t actually ‘read’ text. Instead, input prompts are transformed into a coded language,” explained Matthew Guzdial, an AI scientist and assistant professor at the University of Alberta, in a conversation with TechCrunch. “For instance, the model recognizes ‘the’ as a specific encoding, yet it remains unaware of the individual letters ‘T’, ‘H’, ‘E’ that compose the word.”

This limitation arises because transformers do not take in or produce raw text directly. Instead, text is converted into numerical representations, which the model then uses to generate a coherent response. So although the AI might know that the tokens “straw” and “berry” make up “strawberry,” it may not know that the word consists of the letters ‘s’, ‘t’, ‘r’, ‘a’, ‘w’, ‘b’, ‘e’, ‘r’, ‘r’, ‘y’ in that exact order. As a result, it cannot reliably say how many letters, or how many “r”s, the word contains.
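A minimal Python sketch can make the gap concrete. It assumes a toy vocabulary and a greedy longest-match splitter; the token strings, the IDs, and the function names `toy_tokenize` and `encode` are invented for illustration and do not come from any real model's tokenizer.

```python
# Toy vocabulary: these token strings and IDs are made up for illustration.
TOY_VOCAB = {"straw": 1001, "berry": 1002, "the": 1003}


def toy_tokenize(text, vocab):
    """Split `text` into vocabulary tokens using greedy longest-match."""
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest remaining substring first, then shorter ones.
        for j in range(len(text), i, -1):
            piece = text[i:j]
            if piece in vocab:
                tokens.append(piece)
                i = j
                break
        else:
            # No vocabulary entry matches at position i.
            raise ValueError(f"no token covers {text[i:]!r}")
    return tokens


def encode(text, vocab):
    """Map text to the list of integer IDs a model would actually receive."""
    return [vocab[token] for token in toy_tokenize(text, vocab)]


word = "strawberry"
token_ids = encode(word, TOY_VOCAB)  # two integers, one per token
letter_count = word.count("r")       # counted on the raw characters

print(token_ids)     # [1001, 1002]
print(letter_count)  # 3
```

In this sketch, the letter count is only available from the raw string. The two integers that stand in for the model's input carry no explicit record of which letters they were built from.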

This problem is hard to fix because it is built into the very architecture that powers these language models.

TechCrunch’s Kyle Wiggers previously explored this issue and discussed it with Sheridan Feucht, a PhD candidate at Northeastern University focusing on how LLMs interpret language.

“Determining what constitutes a ‘word’ for a language model is complex. Even if language experts could agree on an ideal set of tokens, models might still benefit from breaking down these tokens even further,” Feucht shared with TechCrunch. “The notion of a perfect tokenizer seems unattainable due to inherent ambiguities.”

The problem grows more complex as LLMs learn more languages. Some tokenization methods assume that a space always precedes a new word, a rule that does not hold for Chinese, Japanese, and many other languages that do not separate words with spaces. A 2023 study by Yennie Jun of Google DeepMind found that some languages require significantly more tokens than English to convey the same meaning.
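The space assumption is easy to see in a small Python sketch. The function `whitespace_tokenize` is a hypothetical stand-in for a tokenizer that splits only on spaces, not the method of any particular model, and the two sample sentences are illustrative.

```python
def whitespace_tokenize(text):
    """Naive tokenizer that treats whitespace as the only word boundary."""
    return text.split()


english = "I like strawberries"
chinese = "我喜欢草莓"  # roughly the same meaning, written without spaces

english_tokens = whitespace_tokenize(english)
chinese_tokens = whitespace_tokenize(chinese)

print(english_tokens)  # ['I', 'like', 'strawberries']
print(chinese_tokens)  # ['我喜欢草莓']
```

The English sentence splits into three words, while the Chinese sentence comes back as a single unbroken chunk, so a tokenizer built on this assumption needs some other way to find word boundaries.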

“Ideally, allowing models to directly analyze characters without tokenization would be preferable, but such an approach is currently beyond the computational capabilities of transformers,” Feucht remarked.

Image generators such as Midjourney and DALL-E do not share the architecture of text generators: they typically use diffusion models rather than transformers. Trained on large image datasets, diffusion models produce an image by progressively refining noise. They excel at recreating familiar subjects like vehicles and faces but struggle with finer details like fingers or handwriting.

Asmelash Teka Hadgu, co-founder of Lesan and a DAIR Institute fellow, told TechCrunch, “In terms of image generation, models demonstrate higher efficacy with large objects like automobiles and facial representations than with intricate details such as digits or handwritten text.”

This may be because such small details appear less prominently in training datasets than common patterns, such as trees usually having green leaves. The problems with diffusion models, however, may be easier to fix than those affecting transformers. For instance, additional training on images of hands has notably improved how some image generators portray fingers.

“As of the recent year, numerous models exhibited poor understanding of digit representation, paralleling text representation challenges,” Guzdial elaborated. “They’ve shown improvement in localized accuracy, so while an image may feature a hand with extra fingers convincingly, the overall structure still lacks coherence.”

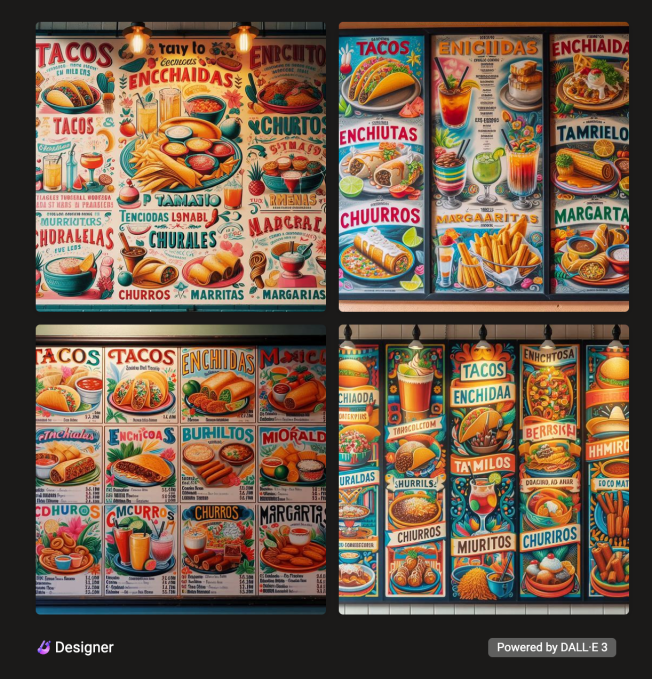

Hence, an AI-created Mexican restaurant menu might list common items like “Tacos,” but you’re equally likely to encounter nonsensical names such as “Tamilos,” “Enchidaa,” and “Burhiltos.”

As the internet buzzes with memes about AI miscounting the letters in “strawberry,” OpenAI is working on an AI project codenamed Strawberry, intended to improve reasoning abilities. The growth of LLMs has been limited by a scarcity of training data that could make them more accurate. Strawberry can reportedly generate accurate synthetic data to improve OpenAI’s models. According to The Information, it can also solve complex word puzzles and math problems it has not seen before.

Meanwhile, Google DeepMind recently introduced AlphaProof and AlphaGeometry 2, AI systems designed for formal mathematical reasoning. Google said the two systems together solved four of the six problems from the International Mathematical Olympiad, a silver-medal-level performance.

There is some irony in memes about AI’s trouble with “strawberry” circulating at the same time as reports on OpenAI’s Strawberry. OpenAI CEO Sam Altman leaned into the joke, showing off the impressive strawberry harvest from his garden.

Compiled by Techarena.au.
