Elon Musk has long promoted Dojo, the AI supercomputer he has positioned as the cornerstone of Tesla’s AI ambitions. Musk has said Tesla will step up its focus on Dojo ahead of the company’s robotaxi reveal in October.

But what is Dojo, and why does it matter so much to Tesla’s future?

In short, Tesla built Dojo as a custom supercomputer to train its “Full Self-Driving” (FSD) neural networks. Improving Dojo brings Tesla closer to full self-driving capability, which is crucial to the success of its planned robotaxi. Today, FSD is installed on about 2 million Tesla vehicles; it can perform several driving tasks on its own but still requires a human driver’s supervision.

Although Tesla pushed its robotaxi unveiling from August to October, both Musk’s public statements and sources inside the company indicate that its pursuit of autonomy remains firm.

Tesla therefore appears poised to spend heavily on AI and Dojo to reach that goal.

The Origin Story of Tesla’s Dojo

Musk wants Tesla to be more than an automaker and a seller of renewable energy products: he wants it to become a leading AI company that masters autonomous driving by mimicking how humans perceive the road.

While other companies combine lidar, radar, and cameras with high-definition maps to localize their vehicles, Tesla relies on camera data alone. Its approach uses advanced neural networks to turn that visual input into driving decisions in real time.

Tesla’s former head of AI, Andrej Karpathy, said at the company’s first AI Day in 2021 that Tesla is essentially trying to build a “synthetic animal” from scratch. Musk had been teasing Dojo since 2019, but Tesla formally announced it at that event.

Unlike Tesla, companies such as Alphabet’s Waymo have commercialized Level 4 autonomous vehicles using a more conventional mix of sensors and maps. Tesla has yet to reach that milestone of driving without human supervision.

Roughly 1.8 million people have paid for Tesla’s FSD despite its steep price, on the promise that Dojo-trained improvements will reach their cars through over-the-air software updates. That scale of adoption has let Tesla collect a vast amount of driving data to train and refine its autonomy models.

However, some experts caution that more data does not automatically produce smarter models, pointing to the cost of collecting it and to limits on how much of it is actually relevant.

“We’re approaching a juncture where the cost of data accumulation could become prohibitively high, and we might max out on the data useful for training these models,” said Anand Raghunathan, Purdue University’s Silicon Valley Professor of Electrical and Computer Engineering, in an interview with TechCrunch. “Just adding more data doesn’t inherently enrich the model’s knowledge base unless that data offers new insights, which is what matters in the end.”

Despite these concerns, the push for more data, and for the computing power needed to process it, looks set to continue for the foreseeable future. Enter Dojo: the computational behemoth designed for exactly this purpose.

Explaining Supercomputers

Dojo is Tesla’s supercomputer, built for AI work and in particular for training FSD. It is named after the halls where martial arts are practiced.

Supercomputers link thousands of compute nodes, each with its own CPUs and GPUs, so they can split large, complex workloads and run them in parallel. GPUs are especially well suited to machine learning because they perform many calculations at once, which makes them central to training AI models such as FSD.
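To make the idea of “many nodes working in parallel” concrete, here is a minimal sketch of data-parallel training, a common way supercomputers train models: each node computes a gradient on its own slice of the data, and the gradients are averaged before a single shared update. This is a generic illustration, not Tesla’s or Dojo’s code; the functions `shard`, `local_gradient`, and `train_data_parallel` and the toy linear model are hypothetical, and the “nodes” are simulated sequentially in one process.

```python
# Hypothetical sketch of data-parallel training (not Tesla/Dojo code).
# Each simulated "node" computes a gradient on its own shard of the data;
# the per-node gradients are then averaged (an "all-reduce") and applied
# as one shared model update.

def shard(data, num_nodes):
    """Split the dataset into num_nodes interleaved shards."""
    return [data[i::num_nodes] for i in range(num_nodes)]

def local_gradient(w, b, samples):
    """Gradient of mean squared error for the model y = w*x + b on one shard."""
    n = len(samples)
    gw = sum(2 * (w * x + b - y) * x for x, y in samples) / n
    gb = sum(2 * (w * x + b - y) for x, y in samples) / n
    return gw, gb

def train_data_parallel(data, num_nodes=4, lr=0.01, steps=5000):
    w, b = 0.0, 0.0
    shards = shard(data, num_nodes)
    for _ in range(steps):
        # On a real cluster these calls would run concurrently on separate nodes.
        grads = [local_gradient(w, b, s) for s in shards]
        # Average the per-node gradients; with equal-sized shards this equals
        # the gradient over the full dataset.
        gw = sum(g[0] for g in grads) / num_nodes
        gb = sum(g[1] for g in grads) / num_nodes
        w -= lr * gw
        b -= lr * gb
    return w, b

if __name__ == "__main__":
    # Synthetic data drawn from y = 3x + 1.
    data = [(x / 10, 3 * (x / 10) + 1) for x in range(100)]
    w, b = train_data_parallel(data)
    print(f"w={w:.3f}, b={b:.3f}")
```

Real systems replace the list comprehension with work dispatched to separate accelerators and the averaging step with a network collective, which is why interconnect bandwidth matters as much as raw compute.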

Tesla also uses Nvidia GPUs for its AI development (more on that below).

The Necessity of a Supercomputer for Tesla

Tesla’s decision to pursue a vision-based autonomous driving system is what drives its need for immense computing power. The AI must interpret huge volumes of road data, then recognize and respond to countless driving scenarios in real time, much as human eyes and brains do.

That requires not only processing visual data instantly but also training Tesla’s neural networks extensively, including in simulation.

Tesla has said that, as of May 2024, its vehicles running FSD version 12 had covered 300 million miles, an illustration of the scale of data it gathers.

Although Tesla currently relies on Nvidia for its supercomputing needs, it wants to reduce that dependence because Nvidia’s top-tier chips are expensive and hard to obtain. Instead, Tesla’s AI team set out to design custom hardware that could be more efficient and cost-effective for AI workloads.

The heart of this initiative is the D1 chip, custom-engineered by Tesla to streamline AI model training.

Insights on Tesla’s Chips

Following Apple’s philosophy, Tesla believes hardware and software should be designed together. That belief led it to move away from conventional GPU architecture and develop its own chips to run Dojo.

Tesla unveiled the D1 chip at AI Day in 2021, with mass production beginning as early as May of the following year. Taiwan Semiconductor Manufacturing Company (TSMC) manufactures the chip on a 7-nanometer process; each D1 packs 50 billion transistors into a 645-square-millimeter die.

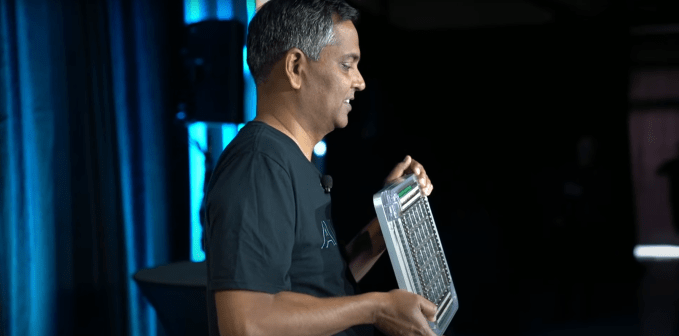

“This chip exemplifies a true machine learning powerhouse, with computation and data handling designed to run concurrently, optimized through our custom ISA tailored for AI workloads,” explained Ganesh Venkataramanan, Tesla’s former senior director of Autopilot hardware, during the 2021 AI Day presentation.

Despite its strengths, the D1 is still less powerful than Nvidia’s A100. The A100, also made by TSMC on a 7-nanometer process, has 54 billion transistors on a larger 826-square-millimeter die, which gives it a slight performance edge over the D1.
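A quick calculation shows how the two chips compare on the figures above. The sketch divides transistor count by die area; the A100 die size of 826 mm² is Nvidia’s published figure and the helper name `transistor_density` is hypothetical. Density is only a rough proxy: it says nothing about clock speed, memory bandwidth, or software, which also shape real performance.

```python
# Back-of-envelope transistor density from the figures quoted above.
# Density is a rough proxy only; it does not determine training performance.

def transistor_density(transistors, die_mm2):
    """Return millions of transistors per square millimeter."""
    return transistors / die_mm2 / 1e6

chips = {
    "Tesla D1": (50e9, 645),     # transistors, die area in mm^2
    "Nvidia A100": (54e9, 826),
}

if __name__ == "__main__":
    for name, (transistors, area) in chips.items():
        print(f"{name}: {transistor_density(transistors, area):.1f}M transistors/mm^2")
```

On these numbers the D1 is the denser chip (about 77.5 million transistors per mm² versus about 65.4 million for the A100), while the A100’s larger die still holds more transistors in total.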

To boost compute capacity and efficiency, Tesla links 25 D1 chips into a single “training tile” that operates as one unit. Each tile delivers 9 petaflops of compute and 36 terabytes per second of bandwidth, and it contains everything it needs for power, cooling, and data transfer. A single tile is therefore a compact supercomputer in its own right, built from 25 smaller ones. A full Dojo system scales up by combining tiles into racks, then cabinets, and ultimately ExaPODs.
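The scaling from chip to ExaPOD is simple multiplication. The sketch below uses the per-tile figures from the text (25 chips, 9 petaflops) and, as an assumption, the configuration Tesla described at AI Day 2021: six tiles per system tray, two trays per cabinet, and ten cabinets per ExaPOD. The constant and function names are hypothetical, and production systems may differ.

```python
# Hypothetical sketch of how Dojo's compute adds up level by level.
# Per-tile figures come from the article; the packaging counts are
# assumptions based on Tesla's 2021 AI Day presentation.
CHIPS_PER_TILE = 25
PFLOPS_PER_TILE = 9.0
TILES_PER_TRAY = 6         # assumption: tiles per "system tray"
TRAYS_PER_CABINET = 2      # assumption
CABINETS_PER_EXAPOD = 10   # assumption

def per_chip_tflops():
    """Average compute per D1 chip implied by the tile figure, in teraflops."""
    return PFLOPS_PER_TILE * 1000 / CHIPS_PER_TILE

def exapod_totals():
    """Return (tiles, D1 chips, peak petaflops) for one ExaPOD."""
    tiles = TILES_PER_TRAY * TRAYS_PER_CABINET * CABINETS_PER_EXAPOD
    return tiles, tiles * CHIPS_PER_TILE, tiles * PFLOPS_PER_TILE

if __name__ == "__main__":
    tiles, chips, pflops = exapod_totals()
    print(f"Per D1 chip: ~{per_chip_tflops():.0f} TFLOPS")
    print(f"ExaPOD: {tiles} tiles, {chips} D1 chips, ~{pflops / 1000:.2f} exaflops")
```

Under these assumptions an ExaPOD holds 120 tiles and 3,000 D1 chips for roughly 1.08 exaflops of peak compute, in line with the roughly 1.1 exaflops Tesla cited.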

Tesla is already working on a next-generation D2 chip, which could put an entire Dojo tile on a single silicon wafer instead of connecting individual chips.

Tesla has not disclosed how many D1 chips it has ordered or when Dojo will reach full operational capacity. Musk’s posts on X, however, suggest he wants a mixed hardware base that combines Tesla’s own AI hardware with third-party chips from Nvidia and possibly AMD.

The Significance of Dojo to Tesla

Controlling its own chip design could eventually let Tesla scale up AI training cheaply and efficiently, especially as production with TSMC ramps up and per-chip costs fall.

It could also reduce Tesla’s reliance on Nvidia chips, which have become increasingly expensive and hard to procure.

On Tesla’s second-quarter earnings call, Musk said Nvidia GPUs were difficult to secure, which is why Tesla needs to invest more in Dojo to guarantee its training capacity.

Tesla still buys Nvidia chips for AI training, as Musk explained on X:

“About $10B allocated for AI-related expenses at Tesla this year, approximately half is directed internally, particularly towards the Tesla-designed AI inference computer and sensors embedded in all our cars, plus Dojo. Regarding the construction of AI training superclusters, Nvidia hardware constitutes about 2/3 of the expenditure. My estimation for Nvidia purchases by Tesla is between $3B to $4B this year.”
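The figures in Musk’s post can be cross-checked with a little arithmetic. The sketch below is an illustrative reading, not Tesla’s accounting: it assumes the quoted shares apply as stated and computes the supercluster budget implied if Nvidia hardware is about two-thirds of it. All names are hypothetical; amounts are in billions of US dollars.

```python
# Illustrative back-of-envelope arithmetic on the figures in Musk's post,
# in billions of US dollars. How spending splits across buckets is an
# assumption; this is not Tesla's accounting.
TOTAL_AI_SPEND = 10.0              # "About $10B ... this year"
INTERNAL_SHARE = 0.5               # "approximately half is directed internally"
NVIDIA_SHARE_OF_CLUSTERS = 2 / 3   # Nvidia is "about 2/3" of supercluster cost
NVIDIA_ESTIMATE = (3.0, 4.0)       # "between $3B to $4B this year"

def internal_spend():
    return TOTAL_AI_SPEND * INTERNAL_SHARE

def remaining_spend():
    """Spending not directed internally."""
    return TOTAL_AI_SPEND - internal_spend()

def implied_cluster_spend():
    """Total supercluster cost implied if Nvidia hardware is ~2/3 of it."""
    low, high = NVIDIA_ESTIMATE
    return low / NVIDIA_SHARE_OF_CLUSTERS, high / NVIDIA_SHARE_OF_CLUSTERS

if __name__ == "__main__":
    low, high = implied_cluster_spend()
    print(f"Internal: ${internal_spend():.1f}B, remainder: ${remaining_spend():.1f}B")
    print(f"Implied supercluster spend: ${low:.1f}B to ${high:.1f}B")
```

On this reading, a $3B to $4B Nvidia bill implies roughly $4.5B to $6B of total supercluster spending, a range that brackets the roughly $5B not directed internally.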

Dojo is a risky bet, and Musk has hedged it more than once by acknowledging that Tesla’s effort may not succeed.

Still, in the longer run, Tesla’s AI work could open up a new business model. Musk has said the first version of Dojo will be tailored to Tesla’s computer vision labeling and training, which is key to improving FSD and to training Optimus, Tesla’s humanoid robot. Beyond those applications, its usefulness in the near term may be limited.

Musk envisions future versions of Dojo handling more general-purpose AI training. That would require substantially reworking existing AI software, most of which is written for GPUs, to take full advantage of Dojo.

TechArena.au
